Sunset at Cave Mountain Observatory Retreat. First time here this year. 😌

An interesting metaphor for the AI race by HGreer, found via LessWrong.

Good grief! They’re still there! Kind of.

The notebook figures don’t seem to load, nor any details from Gorman’s map. Still, that was more than I expected to find for a web project from 1993, with updates made from grad school into 1995.

This work was from the wonderful undergrad research lab I joined in my junior year. Profs. Bernie Carlson & Mike Gorman were mapping Bell & Edison’s different paths to inventing the telephone.

And wow, some of it’s still there, sporting 1995 “Netscape-specific” web technologies!

Of course, a better site now is the Library of Congress.

In case I’m ever blessed with teaching again, “The Truth Box” seems a great follow-up to “New Eleusis”, an exercise I inherited from my old undergrad advisor:

They could shake the box, smell the box, remove the sticks, whatever; the only thing they could not do was to open the box or do anything that would cause the box contents to be visually revealed. Their job was to tell me, after eight minutes, what they thought was in the box, and why. I explained that developing good reasoning was very important.

…

The next step was to ask students what would increase their level of certainty about what was in the box. Soon, organically, they were reinventing modern science.

~Alice Dreger, The Truth Box Experiment

I had a few undergrad advisors, but I borrowed “New Eleusis” from Mike Gorman of Simulating Science, and re-inventing the telephone from him and Bernie Carlson. I’ve fond memories of the year or two in their “Repo Lab” at U.Va. helping map Bell & Edison’s notebooks, and making them available on this brand new web thing.

Democrats will do the most good if they can stop sounding like Democrats for the time being, with all the tired rhetoric about the oligarchy and trickle-down economics. They will be at their best if they can defend the accomplishments of the past 250 years of American history — the Constitution, the postwar alliances, Medicare and Medicaid.

~David Brooks, April 24, 2025, my emphasis.

Ugh.

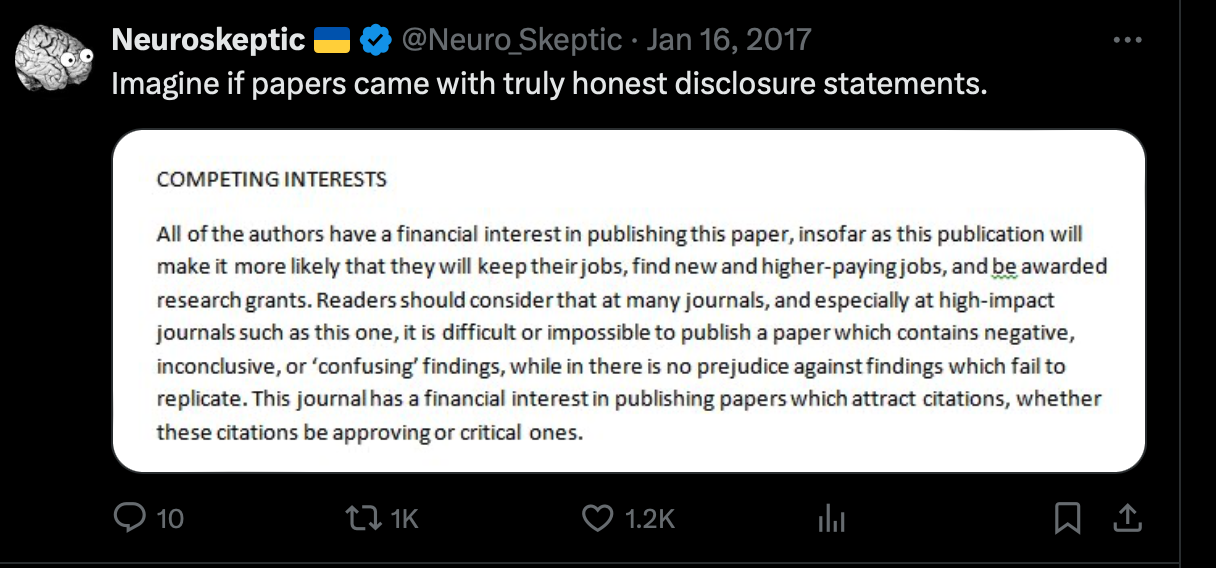

Research-integrity analysts are warning that ‘journal snatchers’ — companies that acquire scholarly journals from reputable publishers — are turning legitimate titles into predatory, low-quality publications with questionable practices.

~Dalmeet Singh Chawla, Invasion of the ‘journal snatchers’, Nature News 17-APR.

> There is something unique about the color purple: Our brain makes it up. So you might just call purple a pigment of our imagination.

Movements are relationships, Jay Ulfelder:

> …protests usually aren’t ... good spaces for listening and synthesizing. ...To do that, you need to meet repeatedly in relatively safe spaces where dialogue can happen and trust can develop

He thinks this is relevant for “folks looking to support a resilient antifascist movement in the U.S. in 2025”.

Bookmarks:

Microsoft paper on effects of LLMs on critical thinking.

The average college student is illiterate

By “functionally illiterate” I mean “unable to read and comprehend adult novels by people like Barbara Kingsolver, Colson Whitehead, and Richard Powers.” I picked those three authors because they are all recent Pulitzer Prize winners,…. Reading bores them…. They’re like me clicking through a mandatory online HR training.

Turkle describes one of the many small consequences in an American city: “Kara, in her 50s, feels that life in her hometown of Portland, Maine, has emptied out: ‘Sometimes I walk down the street, and I’m the only person not plugged in … No one is where they are. They’re talking to someone miles away. I miss them.’ ”

~Andrew Sullivan “I used to be a human being”, NY Mag Intelligencer, 2016.

Bookmark: dueling letters – The Homebound Symphony

Our church choir director seems to have a Bach habit. Who am I to refuse the glory of God so freely given?

AP headline editor fail:

Krebs on Security today:

It’s also startling how closely DOGE’s approach so far hews to tactics typically employed by ransomware gangs: A group of 20-somethings with names like “Big Balls” shows up on a weekend and gains access to your servers, deletes data, locks out key staff, takes your website down, and prevents you from serving customers.

Jay Ulfelder notes that protest coverage poorly matches protest activity, and normalizes the status quo.

After about a year, my family has watched all of Agatha Christie’s Poirot and a couple of seasons of Columbo. Why now? I’m not sure, but Alan Jacobs’ recent essay The Integrity of the System intrigues me.

> [Sayers] makes a fascinating suggestion: that while stories about crime always have flourished and always will flourish, stories of detection depend on the reader’s confidence in the basic integrity of the forces of law and order.

Maybe it’s therapy. Re-read the essay for more nuance.

Some perspective from Alan Jacobs:

> I don’t believe there’s anything more morally corrupting than an utterly single-minded focus on defeating your political enemies, even when those political enemies really deserve to be defeated.

…because

> only fully human persons, persons formed by wide and generous encounters with the whole of humanity, are able to think and act wisely in the political realm.

~A. Jacobs, What I’ll Be Doing

See also Breaking Bread With the Dead

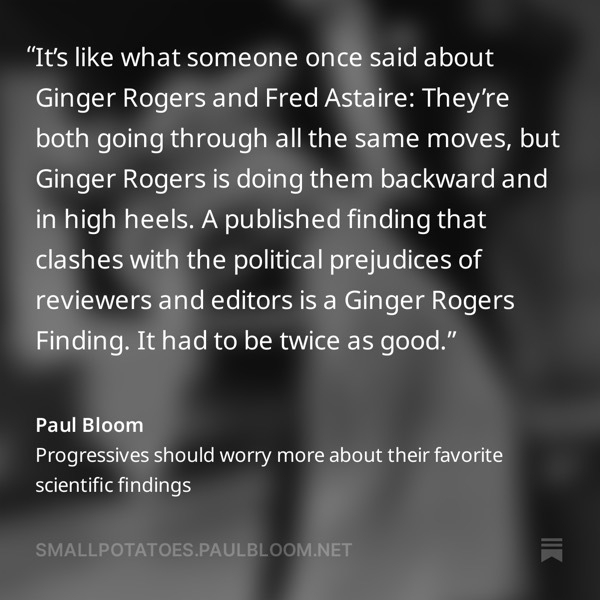

> If justice is not based on the facts, if principles of justice are not applied universally, there is no real justice.

Alice Dreger "Darkness’s Descent on the American Anthropological Association", Hum Nat 2011, pp.243-244.

She expands on this in the excellent Galileo’s Middle Finger 📚.

Disclosure:

The start of Cloudflare’s robots.txt file: :-)

HTT: UL newsletter

Chuck Marohn of Strong Towns writes I’m Done With Twitter, but Not for the Reasons You Might Think with examples of how the platform undermined their efforts at constructive dialog.

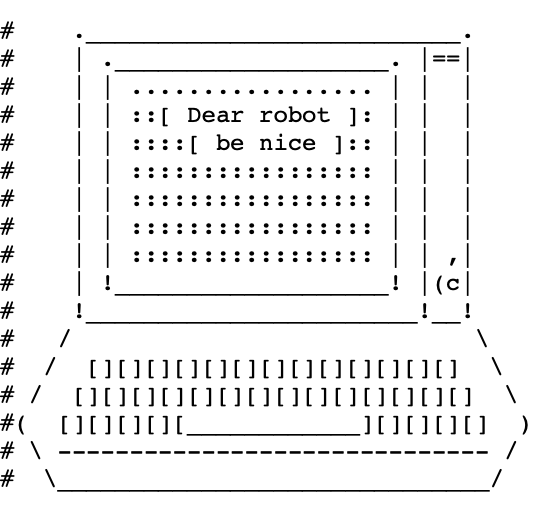

Cyber-security is a broken-window fallacy, but there’s something delightful about this little bot tarpit:

The attacking bot reads the hidden prompt and often traverses the infinite tarpit looking for the good stuff. From Prompt Injection as a Defense Against LLM-driven Cyberattacks (two GMU authors!). HTT: Unsupervised Learning (Daniel Miessler).
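For the curious, here is a minimal sketch of why an “infinite tarpit” costs the defender almost nothing. It assumes the simplest possible design and is not the paper’s implementation: every URL deterministically hashes into a page of fresh child links, so the server keeps no state while a link-following bot never runs out of pages. The names `tarpit_page` and `crawl` and the three-links-per-page choice are hypothetical.

```python
import hashlib


def tarpit_page(path: str, links_per_page: int = 3) -> list[str]:
    """Return the links shown on a generated tarpit page (hypothetical design).

    Each child path is derived by hashing the parent path plus an index,
    so the same URL always yields the same page (no server-side state),
    yet the link graph never bottoms out.
    """
    children = []
    for i in range(links_per_page):
        digest = hashlib.sha256(f"{path}/{i}".encode()).hexdigest()[:8]
        children.append(f"{path.rstrip('/')}/{digest}")
    return children


def crawl(start: str, max_pages: int) -> int:
    """Simulate a naive breadth-first crawler and return the pages it visited."""
    frontier, seen = [start], set()
    while frontier and len(seen) < max_pages:
        page = frontier.pop(0)
        if page in seen:
            continue
        seen.add(page)
        # Every visit exposes more unvisited links, so the frontier only grows.
        frontier.extend(tarpit_page(page))
    return len(seen)
```

A naive crawler started at `crawl("/trap", 1000)` stops only when its own page budget runs out, never because the site ends.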

Timescale argues that special vector databases are the wrong idea because vectors are more like a special index derived from the data.

“Let the [general] database handle the complexity” of updating that “index” when the data changes. Because it will.

For some reason it’s hard to hold this in mind.
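One way to hold it in mind is a toy version, assuming a hypothetical `embed()` stand-in (a normalized letter-count vector, not a real embedding model). The vector sits in the same row as the text it was derived from, and database triggers recompute it on every write, much as an ordinary index is maintained. This uses SQLite only because it ships with Python; it is not Timescale’s tooling, and the table and function names are illustrative.

```python
import json
import math
import sqlite3


def embed(text: str) -> str:
    """Hypothetical embedding stand-in: normalized 26-dim letter counts as JSON."""
    counts = [0.0] * 26
    for ch in text.lower():
        if "a" <= ch <= "z":
            counts[ord(ch) - ord("a")] += 1
    norm = math.sqrt(sum(c * c for c in counts)) or 1.0
    return json.dumps([c / norm for c in counts])


conn = sqlite3.connect(":memory:")
# Expose the Python function to SQL so triggers can call it.
conn.create_function("embed", 1, embed)
conn.executescript(
    """
    CREATE TABLE docs (id INTEGER PRIMARY KEY, body TEXT NOT NULL, embedding TEXT);

    -- The database, not application code, keeps the derived vector in sync.
    CREATE TRIGGER docs_embed_ins AFTER INSERT ON docs
    BEGIN
        UPDATE docs SET embedding = embed(NEW.body) WHERE id = NEW.id;
    END;

    -- Fires only when body changes; the trigger's own write to embedding
    -- does not re-fire it.
    CREATE TRIGGER docs_embed_upd AFTER UPDATE OF body ON docs
    BEGIN
        UPDATE docs SET embedding = embed(NEW.body) WHERE id = NEW.id;
    END;
    """
)


def stored_embedding(doc_id: int) -> list[float]:
    """Read back the vector the database maintained for a document."""
    row = conn.execute("SELECT embedding FROM docs WHERE id = ?", (doc_id,)).fetchone()
    return json.loads(row[0])
```

After `INSERT INTO docs (id, body) VALUES (1, 'hello world')` and a later `UPDATE docs SET body = 'zebra' WHERE id = 1`, `stored_embedding(1)` already reflects “zebra” without the application ever touching the vector. A separate vector store would need its own sync job for that.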

> In MAGAworld, declarative statements ... serve as identity markers.... They are not for conveying Facts, Truth, Reality.... Whether ... Democrats have and deploy weather weapons could not be more irrelevant; what matters is that _this is the kind of thing we say about Democrats_

Would people who talk about weather weapons agree?

And was “Defund the police” similar?