Linda Fallacy?

Proposed Constitutional Amendments (VA)

QUESTION 2:

Should an automobile or pickup truck that is owned and used primarily by or for a veteran….

Are pickup trucks no longer automobiles? Or is veterans+pickups more salient?

Vox’s Kelsey Piper discusses the replication crisis in “Future Perfect” this week, elaborating on Alvaro de Menard’s (probably a nom-de-blog) recent post What’s wrong with social science and how to fix it…, in the context of other Vox coverage of replication and science.

It’s a good piece. If you’ve read Menard you won’t learn a lot, but it’s got the Vox flair and reaches a wider audience. It also adds some more context, including previous Vox articles.

The problem (summarizing Menard):

If scientists are pretty good at predicting whether a paper replicates, how can it be the case that they are as likely to cite a bad paper as a good one? Menard theorizes that many scientists don’t thoroughly check — or even read — papers once published, expecting that if they’re peer-reviewed, they’re fine. Bad papers are published by a peer-review process that is not adequate to catch them — and once they’re published, they are not penalized for being bad papers.

The problem (from a 2016 Vox piece):

We heard back from 270 scientists all over the world, including graduate students, senior professors, laboratory heads, and Fields Medalists. They told us that, in a variety of ways, their careers are being hijacked by perverse incentives. The result is bad science.

The real problem is the culture of science.

Ode to our forecasters: What a Year! 54K surveys, 42K trades, 3K claims; Dedicated ‘casters, belief shift from priors, SSR looks sound. Replications TBD, but crosscheck hints good accuracy.

@replicationmarkets #openscience #reproducibility #replication

I reviewed the Watanabe manuscript. I think it’s worth a follow-up. Am I missing something? #reproducibility #openscience #epitwitter

This sounds fantastic. Why haven’t I done this?

Karl Popper on Social Media

As for Adler, I was much impressed by a personal experience. Once, in 1919, I reported to him a case which to me did not seem particularly Adlerian, but which he found no difficulty in analysing in terms of his theory of inferiority feelings, although he had not even seen the child. Slightly shocked, I asked him how he could be so sure. “Because of my thousandfold experience,” he replied; whereupon I could not help saying: “And with this new case, I suppose, your experience has become thousand-and-one-fold.”

~ “Conjectures and Refutations”

Saturn. First attempt, just holding my iPhone near the eyepiece and clicking photos until one finally aligned.

Science is getting harder to read. More jargon, more acronyms, worse writing. All those reduce citations.

79% of acronyms are used fewer than 10 times, ever. So cut back.

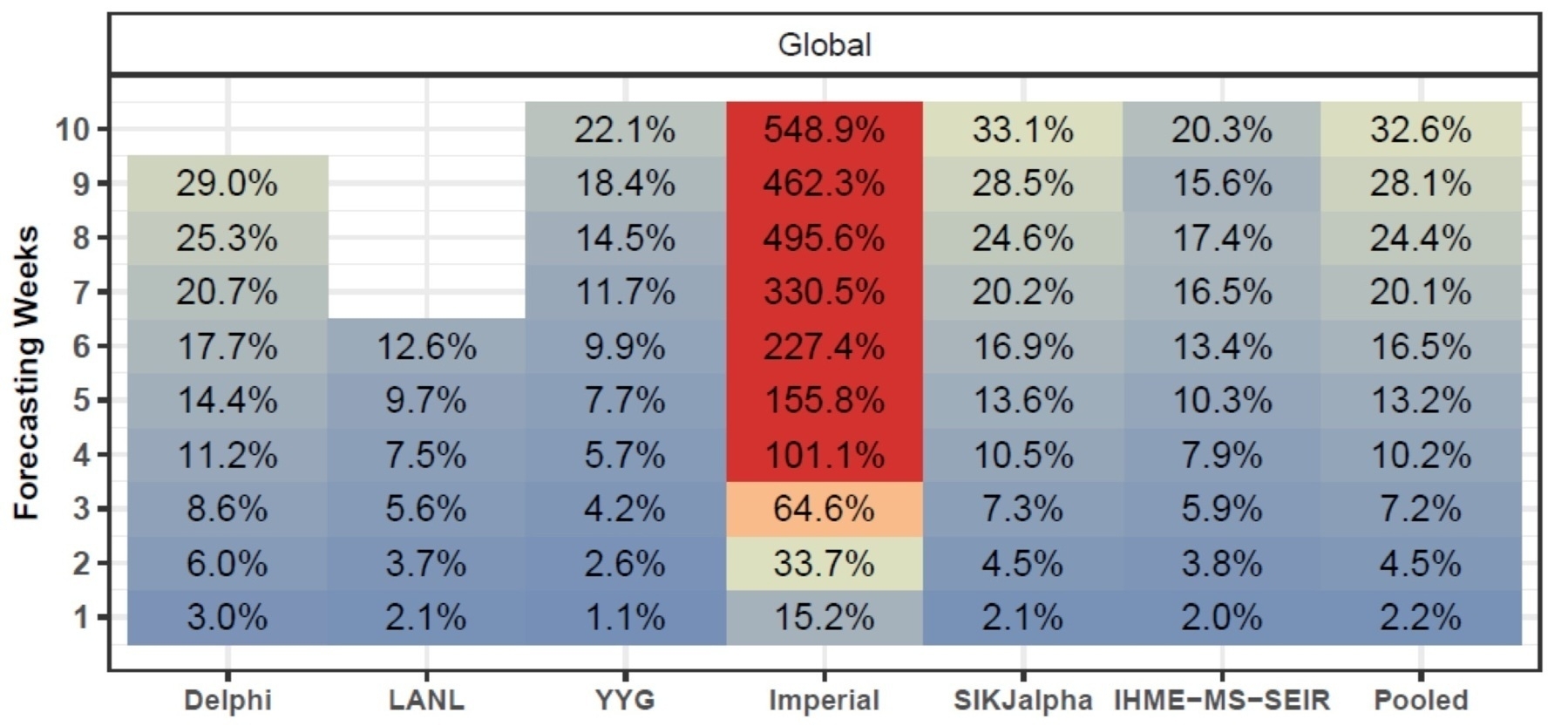

New PDF compares accuracy of 7 key #COVID-19 models. On mean absolute % error for total deaths (shown), YYG and IHME’s Mortality Spline (both w/SEIR) did well; Imperial’s SEIR way overestimated. On predicted peak timing, IHME’s simple Curve Fit won - huh. See paper for caveats.
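For readers unfamiliar with the metric, here is a minimal sketch of mean absolute percentage error. The function name and the numbers are made up for illustration, and the paper's exact definition may differ in detail.

```python
def mean_absolute_pct_error(actual, predicted):
    """Mean of |actual - predicted| / |actual|, expressed as a percentage.

    Hypothetical helper for illustration; not taken from the paper.
    """
    if len(actual) != len(predicted) or not actual:
        raise ValueError("need two non-empty sequences of equal length")
    total = sum(abs(a - p) / abs(a) for a, p in zip(actual, predicted))
    return 100.0 * total / len(actual)


# Made-up cumulative death counts, NOT real model output.
observed = [100, 200, 400]
forecast = [110, 180, 400]
print(round(mean_absolute_pct_error(observed, forecast), 2))
```

Because each error is scaled by the observed value, a model that overshoots early, when counts are small, is penalized heavily.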

Of the various kinds of misinformation during this pandemic, at least I was spared relatives touting the cow urine cure.

Piece in Meedan on coronavirus misinfo in India.

Short well-written plea to actually read the article.

I’ve been trying Readup, which is like a small community that can’t comment unless they use Safari’s Reader-mode and finish the article.

By William Deresiewicz | March 1, 2010

Reminders for my increasingly distracted brain.

If you want others to follow, learn to be alone with your thoughts

I find for myself that my first thought is never my best thought.

Now that’s the third time I’ve used that word, concentrating. Concentrating, focusing. You can just as easily consider this lecture to be about concentration as about solitude. Think about what the word means. It means gathering yourself together into a single point rather than letting yourself be dispersed everywhere into a cloud of electronic and social input. It seems to me that Facebook and Twitter and YouTube—and just so you don’t think this is a generational thing, TV and radio and magazines and even newspapers, too—are all ultimately just an elaborate excuse to run away from yourself. To avoid the difficult and troubling questions that being human throws in your way. Am I doing the right thing with my life? Do I believe the things I was taught as a child? What do the words I live by—words like duty, honor, and country—really mean? Am I happy?

In my day, philosophers encountered birth control pills at least twice during training. First and easiest, your account of causation could not simply say birth control pills reduce the chance of pregnancy - they have no effect on men’s chance. Second, and trickier, how to handle their effect on blood clots? By simulating pregnancy, they increase the risk of clots. But by preventing pregnancy they decrease it. Once, you could publish papers about that.

Well, here they are again. MIT Press has a new journal devoted entirely to rapid reviews of COVID-19 papers. (Hopkins does too.) And by way of introducing them, their most recent reviews.

This study on estrogen got two strong reviews. Women are less susceptible than men to C19.* Estrogen is one possibility. The authors confirm that post-menopausal women had worse symptoms, but then age is an even stronger risk factor.

However, thanks to medicine apparently invented to confound philosophers, it’s possible to separate estrogen from age. Among pre-menopausal women, those on oral contraceptives appear to have fared better. (This was not clear for older women on hormone replacement.) The study has some limits - it’s not a randomized trial.

But once again causal analysis of pills and blood clots is relevant, and here the pill itself provides a quasi-intervention. Causal payback, baby.

——

In a recent Nature essay urging pre-registering replications, Brian Nosek and Tim Errington note:

Conducting a replication demands a theoretical commitment to the features that matter.

That draws on their paper What is a replication? and Nosek’s earlier UQ talk of the same name arguing that a replication is a test with “no prior reason to expect a different outcome.”

Importantly, it’s not about procedure. I wish I’d thought of that, because it’s obvious after it’s pointed out. Unless you are offering a case study, you should want your result to replicate when there are differences in procedure.

But psychology is a complex domain with weak theory. It’s hard to know what will matter. There is no prior expectation that the well-established Weber-Fechner law would fail among the Kalahari – but it would be interesting if it did. The well-established Müller-Lyer illusion does seem to fade in some cultures. That requires different explanations.

Back to the Nature essay:

What, then, constitutes a theoretical commitment? Here’s an idea from economists: a theoretical commitment is something you’re willing to bet on. If researchers are willing to bet on a replication with wide variation in experimental details, that indicates their confidence that a phenomenon is generalizable and robust. … If they cannot suggest any design that they would bet on, perhaps they don’t even believe that the original finding is replicable.

This has the added virtue of encouraging dialogue with the original authors rather than drive-by refutations. And by pre-registering, you both declare that before you saw the results, this seemed a reasonable test. Perhaps that will help you revise beliefs given the results, and suggest productive new tests.

What is the purpose of retraction? Clearly it’s appropriate in cases of fraud or negligence. But what of the routine error of novel science? Surely this is defensible:

I agree we were wrong and an unpublished specimen will eventually prove it, but I disagree that a retraction was the best way to handle the situation.

Taken from RetractionWatch.

[In a piece about masks](https://somethingstillbugsme.substack.com/p/many-people-say-that-it-is-patriotic), reporter Cat Ferguson, who blogs at @somethingstillbugsme@substack.com, writes:

To any journalists reading this who cover COVID-19 science: please keep an eye on Retraction Watch’s list of retracted or withdrawn papers. If something seems too good to be true, push on it. Whether it’s premature to say the retraction rate is exceptionally high for COVID-19 papers, it’s worth it to be overly skeptical….

For COVID forecasting, remember the superforecasters at Good Judgment. Currently placing US deaths by March in 200K - 1.1M, with 3:2 for above 350K, up from 1:1 on July 11.
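To read those odds as probabilities, here is a small sketch, assuming "3:2 for" means three parts for to two parts against. The helper name is mine.

```python
from fractions import Fraction


def odds_to_probability(in_favor, against):
    """Convert odds of in_favor:against into a probability."""
    return Fraction(in_favor, in_favor + against)


print(float(odds_to_probability(3, 2)))  # current odds for above 350K
print(float(odds_to_probability(1, 1)))  # odds on July 11
```

So the shift from 1:1 to 3:2 is a move from 50% to 60%.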

“Foolish demon, it did not have to be so.” But Taraka was no more. ~R. Zelazny

Alan Jacobs with a cautionary tale about assuming news is representative of reality, and remembering to sanity-check our answers. blog.ayjay.org/proportio…

Worried about #COVID, I did not join #BLM protests. Even if outdoors + masks, marches bunch up, & there are only so many restrooms. It’s been an open question what effect they had. NCRC has reviewed a 1-JUN NBER study: @ county level, seems no. Can’t address individ.

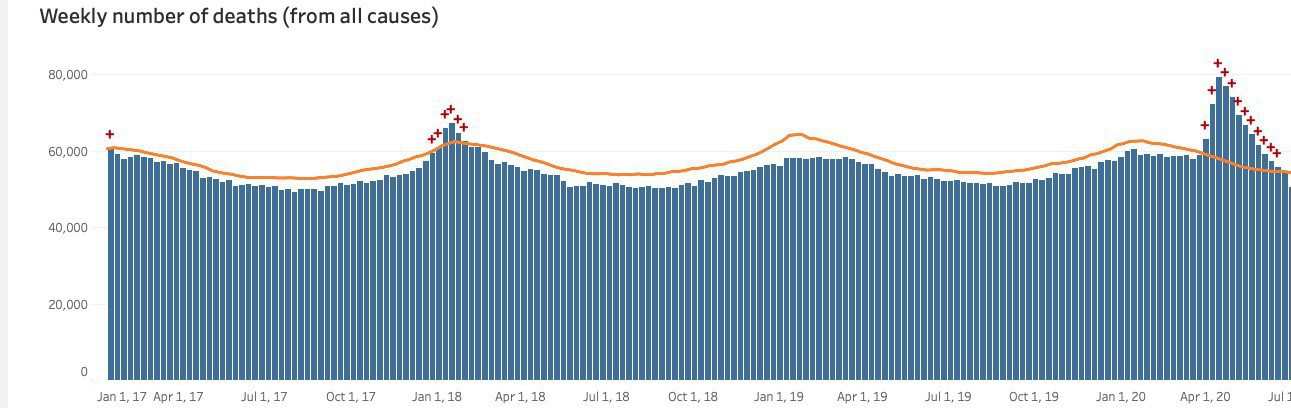

Good news: Despite case rise, excess deaths have been dropping, nearly back to 100% after high 142%. Bad news: @epiellie thinks it’s just lag: early test ➛ more lead time. Cases up 3-4 wks ago, ICU 2-3, deaths up last period. Q: why do ensemble models expect steady death rate?

I saw my old and much-loved Monash colleague #”ChrisWallace” https://en.wikipedia.org/wiki/Chris_Wallace_(computer_scientist) trending on Twitter. Alas, it turns out it’s just some reporter with a 5-second clip.

How about #WallaceTreeMultiplier, #MML, #ArrowOfTime, #SILIAC.

Bob Horn sent me this news in Nature

NEWS

16 JULY 2020 Open-access Plan S to allow publishing in any journal Funders will override policies of subscription journals that don’t let scientists share accepted manuscripts under open licence. Richard Van Noorden

This seems good news, unless you’re a journal.

I expect journals to do good quality control, and top journals to do top quality control. At minimum good review and gatekeeping (they are failing here, but assume that is fixed separately). But also, production. Most scientists can neither write nor draw, and I want journals to minimize typos and maximize production quality. If I want to struggle with scrawl, I’ll go for preprints: it’s fair game there.

So, if you (the journal) can’t charge me for access, and I still expect high quality, you need to charge up front. The obvious candidates are the authors and funders. The going rate right now seems to be around $2000 per article, which is a non-starter for authors. Authors of course want to fix this by getting the funders to pay, but that money comes from somewhere.

Here’s some uninformed back-of-the-envelope saying that will be hard.

Looking good so far!

So… seems one webslinger needs to be able to manage about 10 journal websites. Is that doable? How well do the big publishers scale? Do they get super efficient, or fall prey to Parkinson’s law?

Alternative: societies / funders have to subsidize the journals as necessary road infrastructure. That might amount to half the costs. How much before they effectively insulate the new journals from accountability to quality control… again?

Dr. Rachel Thomas writes,

When we think about AI, we need to think about complicated real-world systems [because] decision-making happens within complicated real-world systems.

For example, bail/bond algorithms live in this world:

for public defenders to meet with defendants at Rikers Island, where many pre-trial detainees in NYC who can’t afford bail are held, involves a bus ride that is two hours each way and they then only get 30 minutes to see the defendant, assuming the guards are on time (which is not always the case)

Designed or not, this system leads innocents to plead guilty so they can leave jail faster than if they waited for a trial. I hope that was not the point.

Happily I don’t work bail/bond algorithms, but one decision tree is much like another. ”We do things right” means I need to ask more about decision context. We know decision theory - our customers don’t. Decisions should weigh the costs of false positives versus false negatives. It’s tempting to hand them the maximized ROC curve and make threshold choice Someone Else’s Problem. But Someone Else often accepts the default.

False positives abound in cyber-security. The lesser evil is being ignored like some nervous “check engine” light. The greater is being too easily believed. We can detect anomalies - but usually the customer has to investigate.

We can help by providing context. Does the system report confidence? Does it simply say “I don’t know” when appropriate? Do we know the relative costs of misses and false alarms? Can the customer adjust those for the situation?
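Here is a rough sketch of what letting the customer adjust those costs could look like: instead of shipping one default threshold, pick the alert threshold that minimizes total cost for the customer's own cost of a false alarm versus a miss. The scores, labels, and function names are invented for illustration and do not come from any real detector.

```python
def total_cost(scores, labels, threshold, cost_fp, cost_fn):
    """Cost of alerting on every score >= threshold.

    labels: 1 = real incident, 0 = benign.
    cost_fp: cost of a false alarm; cost_fn: cost of a miss.
    """
    cost = 0.0
    for score, label in zip(scores, labels):
        alert = score >= threshold
        if alert and label == 0:
            cost += cost_fp  # false alarm: someone investigates nothing
        elif not alert and label == 1:
            cost += cost_fn  # miss: a real incident goes unnoticed
    return cost


def best_threshold(scores, labels, cost_fp, cost_fn):
    """Try each observed score (plus 'never alert') as the threshold."""
    candidates = sorted(set(scores)) + [float("inf")]
    return min(
        candidates,
        key=lambda t: total_cost(scores, labels, t, cost_fp, cost_fn),
    )


# Made-up anomaly scores with known outcomes.
scores = [0.1, 0.3, 0.4, 0.6, 0.8, 0.9]
labels = [0, 0, 1, 0, 1, 1]

print(best_threshold(scores, labels, cost_fp=1, cost_fn=10))  # misses are costly
print(best_threshold(scores, labels, cost_fp=10, cost_fn=1))  # alarms are costly
```

On this toy data the chosen threshold moves from 0.4 when misses are expensive to 0.8 when false alarms are expensive, which is the sense in which the default threshold is a decision someone made for the customer.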

The announcement of RR:C19 seems a critical step forward. Similar to Hopkins' Novel Coronavirus Research Compendium. Both are mentioned in Begley’s article.

So… would it help to add prediction markets on replication, publication, citations?

Unsurprisingly, popular media is more popular:

A new study finds that “peer-reviewed scientific publications receiving more attention in non-scientific media are more likely to be cited than scientific publications receiving less popular media attention.”